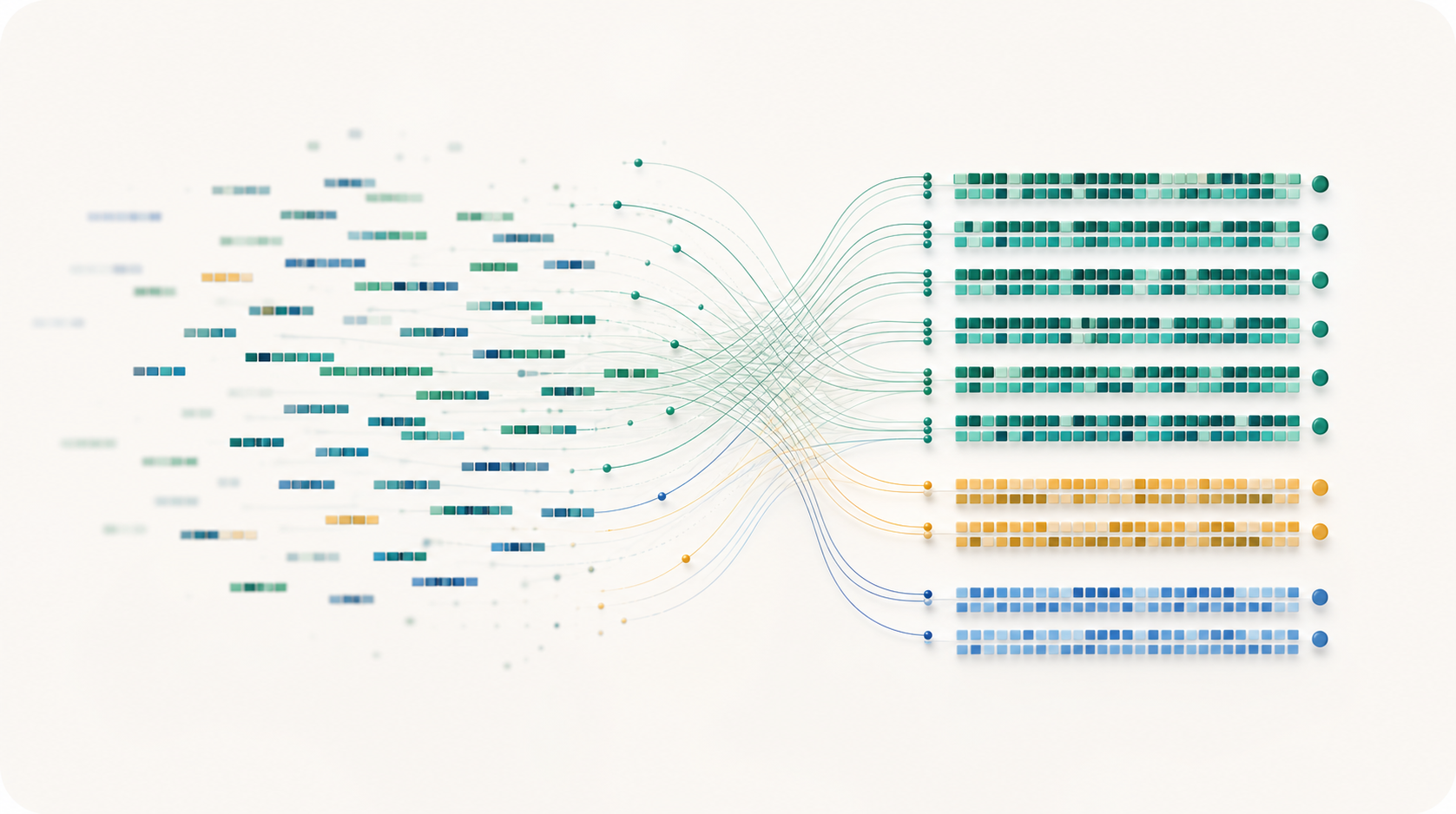

Mean throughput on repeated public MAGeCK/Yusa CRISPR rows.

DotMatch

Auditable assignment of short sequencing reads to known DNA targets.

Count CRISPR guides, split inline barcodes, and match short DNA reads to fixed target lists using exact matching plus one-mismatch or one-base indel rescue. Ambiguous reads are reported, not guessed.

Best-supported today: CRISPR guide counting from public MAGeCK/Yusa FASTQs, with MAGeCK-compatible count matrices and checked benchmark artifacts.

- About 28.7 MB: peak memory for the repeated DotMatch Hamming and exact lanes.

- Zero disagreements: independent edit-distance validation over 2,000 checked reads.

- 87,437 guides: the current MAGeCK/Yusa guide library in the checked benchmark.

The public CRISPR evidence in plain English.

We are keeping the claims narrow for v0.1.0. On repeated public MAGeCK/Yusa CRISPR guide-counting rows, DotMatch Hamming k=1 processed about 331k reads/s using about 28.7 MB peak memory; guide-counter processed about 195k reads/s using about 529 MB, and MAGeCK exact count processed about 93k reads/s using about 159 MB.

The Yusa rows are in the repo.

These rows are not a leaderboard. They are the first public case we can rerun and inspect: five repeats of 100k records per sample for DotMatch, MAGeCK, and guide-counter, with the exact, Hamming, and Levenshtein lanes kept separate. Validation against Edlib, an independent edit-distance implementation, found zero disagreements across 2,000 checked reads.

k=1 Levenshtein usually checks only a few candidates.

On the public Yusa rows, the index sends an average of 2.822 candidate targets per read to exact verification, out of an 87,437-guide library. In biology terms, that lane allows one substitution, insertion, or deletion.
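The page does not describe how the index chooses its candidates. One standard way to get a short candidate list that never misses a k=1 Levenshtein hit is a deletion-neighborhood filter (the symmetric-delete idea behind SymSpell-style indexes): if two sequences are within one edit, then the sequences themselves, or some one-base deletion of each, are identical. The minimal sketch below uses that filter and then verifies candidates with a plain edit-distance DP. It is a hypothetical illustration, not DotMatch's index; `OneEditIndex` and its methods are names invented here.

```python
from collections import defaultdict


def deletion_neighborhood(seq: str) -> set:
    """The sequence itself plus every string made by deleting exactly one base."""
    keys = {seq}
    for i in range(len(seq)):
        keys.add(seq[:i] + seq[i + 1:])
    return keys


def levenshtein(a: str, b: str) -> int:
    """Edit distance counting substitutions, insertions, and deletions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1,                # delete ca
                           cur[j - 1] + 1,             # insert cb
                           prev[j - 1] + (ca != cb)))  # match or substitute
        prev = cur
    return prev[-1]


class OneEditIndex:
    """Hypothetical k=1 Levenshtein index: filter by shared deletion keys, then verify."""

    def __init__(self, targets):
        self.targets = list(targets)
        self.buckets = defaultdict(set)  # deletion key -> target indices
        for idx, target in enumerate(self.targets):
            for key in deletion_neighborhood(target):
                self.buckets[key].add(idx)

    def candidates(self, read: str) -> set:
        """Every target within one edit of the read is guaranteed to appear here."""
        found = set()
        for key in deletion_neighborhood(read):
            found |= self.buckets.get(key, set())
        return found

    def verify(self, read: str, k: int = 1) -> list:
        """Exact check of the (usually few) candidates: [(target index, distance)]."""
        hits = []
        for idx in sorted(self.candidates(read)):
            d = levenshtein(read, self.targets[idx])
            if d <= k:
                hits.append((idx, d))
        return hits


index = OneEditIndex(["ACGTACGA", "ACGTACGC", "TTTTGGGG"])
print(index.candidates("ACGTACGT"))  # {0, 1}
print(index.verify("ACGTACGT"))      # [(0, 1), (1, 1)]
```

The filter can let false candidates through (`AC` and `CA` share the keys `A` and `C` yet are two edits apart), which is why every candidate still goes through the exact distance check.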

The CRISPR counter stays small.

In the repeated Yusa runs, the DotMatch Hamming and exact lanes peak at around 28.7 MB of memory; guide-counter peaks at around 528.7 MB on the same fixture.

Comparator counts are useful, but not oracles.

MAGeCK and guide-counter help us compare familiar workflows. Correctness is checked against exhaustive assignment and Edlib, not whichever external tool happens to agree.

Use it when assignment choices matter.

Most DotMatch jobs start as FASTQ reads and a target table. The point is not only speed; it is making corrected, ambiguous, and unmatched reads visible enough to audit.
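The first step of such a job is reading FASTQ records and cutting out the region where the target should sit. A minimal sketch, assuming standard four-line FASTQ records and that a start such as `--guide-start 23` is a 0-based offset (the demux example's `--barcode-start 0` hints at this, but the page does not say). The helpers `open_fastq`, `read_fastq`, and `target_window` are illustrative names, not part of DotMatch's Python bindings.

```python
import gzip
import io
from typing import Iterator, Optional, TextIO, Tuple


def open_fastq(path: str) -> TextIO:
    """Open plain or gzip-compressed FASTQ as text."""
    if path.endswith(".gz"):
        return gzip.open(path, "rt")
    return open(path, "r")


def read_fastq(handle: TextIO) -> Iterator[Tuple[str, str, str]]:
    """Yield (name, sequence, quality) from four-line FASTQ records."""
    while True:
        header = handle.readline()
        if not header:
            return  # clean end of file
        seq = handle.readline().rstrip("\r\n")
        plus = handle.readline()
        qual = handle.readline().rstrip("\r\n")
        if not header.startswith("@") or not plus.startswith("+"):
            raise ValueError(f"malformed FASTQ record at {header.strip()!r}")
        if len(seq) != len(qual):
            raise ValueError(f"sequence/quality length mismatch at {header.strip()!r}")
        yield header[1:].rstrip("\r\n"), seq, qual


def target_window(seq: str, start: int, length: int) -> Optional[str]:
    """Fixed-position slice, assuming a 0-based start; None if the read is too short."""
    if start < 0 or start + length > len(seq):
        return None
    return seq[start:start + length]


# In-memory example: 23 flanking bases, a 19-base guide, then 4 more bases.
record = "@r1\n" + "N" * 23 + "ACGTACGTACGTACGTACG" + "TTTT\n+\n" + "I" * 46 + "\n"
for name, seq, qual in read_fastq(io.StringIO(record)):
    print(name, target_window(seq, 23, 19))  # r1 ACGTACGTACGTACGTACG
```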

Use DotMatch when you have

- CRISPR guide-counting FASTQs

- inline barcode reads

- known primer, panel, or whitelist targets

- classic per-cycle BCL demultiplexing jobs

DotMatch gives you

- one assignment per read

- explicit ambiguous and unmatched reads

- one-base mismatch or indel rescue

- MAGeCK-compatible count matrices and QC tables

Do not use DotMatch for

- genome alignment or variant calling

- SAM/BAM/CIGAR output

- downstream CRISPR screen statistics

- CBCL/NovaSeq demultiplexing or wildcard N semantics
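A MAGeCK-compatible count matrix is a tab-separated table. The sketch below writes one in the layout MAGeCK documents for count tables (an `sgRNA` column, a `Gene` column, then one column per sample) from per-sample tallies of assigned reads. `write_count_matrix` is a hypothetical helper and the guide and sample names are made up; this is not DotMatch's own writer.

```python
import csv
import io
from collections import Counter


def write_count_matrix(handle, library, sample_counts):
    """Write a MAGeCK-style count table: sgRNA, Gene, then one column per sample.

    library: list of (sgrna_id, gene) pairs in library order.
    sample_counts: {sample label: Counter of sgrna_id -> assigned reads}.
    Only assigned reads belong here; ambiguous and unmatched reads go to QC instead.
    """
    known = {sgrna for sgrna, _ in library}
    for sample, counts in sample_counts.items():
        unknown = set(counts) - known
        if unknown:
            raise ValueError(f"{sample}: counts for guides not in the library: {sorted(unknown)}")
    samples = list(sample_counts)
    writer = csv.writer(handle, delimiter="\t", lineterminator="\n")
    writer.writerow(["sgRNA", "Gene", *samples])
    for sgrna, gene in library:
        # Counter returns 0 for guides with no reads, so every guide gets a row.
        writer.writerow([sgrna, gene, *(sample_counts[s][sgrna] for s in samples)])


library = [("sg_A", "GENE1"), ("sg_B", "GENE1"), ("sg_C", "GENE2")]
counts = {"plasmid": Counter({"sg_A": 120, "sg_C": 7}), "day14": Counter({"sg_B": 3})}
out = io.StringIO()
write_count_matrix(out, library, counts)
print(out.getvalue(), end="")
```

Writing an explicit 0 for unseen guides keeps the matrix rectangular, which downstream screen statistics expect.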

- Targets: a fixed guide, barcode, primer, whitelist, or panel sequence list

- Hamming k=1: allow one mismatch, no indels

- Levenshtein k=1: allow one substitution, insertion, or deletion

- Ambiguous: reads that match multiple targets are reported, not forced into a guide or barcode

- Peak memory: the highest memory use reached during a run

- Edlib validation: checked against an independent edit-distance implementation
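The difference between the two k=1 lanes shows up when a base is missing from the read. In a fixed-position window, a one-base deletion pulls every downstream base one position left, so Hamming distance counts a mismatch at most later positions, and even Levenshtein distance on a same-length window sees two edits (the deletion plus the extra base that shifted in). The sketch below shows this and adds a hypothetical `best_window_distance` that also tries windows one base shorter or longer; we are assuming, not confirming, that this resembles what `--indel-window 1` does.

```python
from typing import Optional


def hamming(a: str, b: str) -> int:
    """Mismatches between equal-length strings; no insertions or deletions."""
    if len(a) != len(b):
        raise ValueError("Hamming distance needs equal-length strings")
    return sum(x != y for x, y in zip(a, b))


def levenshtein(a: str, b: str) -> int:
    """Edit distance counting substitutions, insertions, and deletions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1, cur[j - 1] + 1, prev[j - 1] + (ca != cb)))
        prev = cur
    return prev[-1]


def best_window_distance(target: str, read: str, start: int,
                         indel_window: int = 1) -> Optional[int]:
    """Hypothetical indel window: try slices of length len(target) +/- indel_window."""
    best = None
    for length in range(len(target) - indel_window, len(target) + indel_window + 1):
        piece = read[start:start + length]
        if len(piece) == length:  # skip slices that run off the end of the read
            d = levenshtein(target, piece)
            best = d if best is None else min(best, d)
    return best


target = "ACGTTGCA"
read = "NN" + "ACTTGCA" + "GG"    # the guide's G at offset 2 was deleted
window = read[2:2 + len(target)]  # "ACTTGCAG": a downstream base shifts in
print(hamming(target, window))                # 5
print(levenshtein(target, window))            # 2
print(best_window_distance(target, read, 2))  # 1
```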

One CRISPR run, from FASTQ to QC.

This is the practical shape of the best-supported workflow: reads in, a guide-by-sample count matrix out, and a small set of QC files that say what happened to every assignment class.

```bash
dotmatch crispr-count \
  --library yusa_library.csv \
  --samples samples.tsv \
  --guide-start 23 \
  --guide-length 19 \
  --k 1 \
  --metric levenshtein \
  --indel-window 1 \
  --out counts.mageck.tsv \
  --summary qc.json \
  --report report.html
```

- counts.mageck.tsv: guide-by-sample count matrix

- qc.json: exact, rescued, ambiguous, and unmatched reads

- report.html: archived run report

Read: ACGTACGT

Guide A: ACGTACGA distance 1

Guide B: ACGTACGC distance 1

Some tools may pick one of the two guides or count the read toward both.

DotMatch reports: ambiguous

Ambiguous reads are not silently counted into a guide or barcode. They stay available for QC and diagnosis.
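The ambiguity rule in this example can be written as an exhaustive scan: score the read against every guide, keep guides within k edits, and only assign when one guide is strictly closest. A minimal sketch; the page only shows the tie case, so we assume that a unique closest target wins (an exact hit beats a one-edit hit) and that any tie at the closest distance is ambiguous. `Call` and `assign` are hypothetical names, and the status labels mirror the QC classes listed for `qc.json`.

```python
from typing import Dict, NamedTuple, Optional


class Call(NamedTuple):
    status: str              # "exact", "rescued", "ambiguous", or "unmatched"
    target: Optional[str]    # assigned target name, or None
    distance: Optional[int]  # best distance found, or None when unmatched


def levenshtein(a: str, b: str) -> int:
    """Edit distance counting substitutions, insertions, and deletions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1, cur[j - 1] + 1, prev[j - 1] + (ca != cb)))
        prev = cur
    return prev[-1]


def assign(read: str, targets: Dict[str, str], k: int = 1) -> Call:
    """Exhaustive scan: score every target, then apply the assumed ambiguity policy."""
    scored = [(levenshtein(read, seq), name) for name, seq in targets.items()]
    within = [(d, name) for d, name in scored if d <= k]
    if not within:
        return Call("unmatched", None, None)
    best = min(d for d, _ in within)
    winners = [name for d, name in within if d == best]
    if len(winners) > 1:
        # Tie at the best distance: report it instead of picking or splitting the read.
        return Call("ambiguous", None, best)
    return Call("exact" if best == 0 else "rescued", winners[0], best)


guides = {"Guide A": "ACGTACGA", "Guide B": "ACGTACGC"}
print(assign("ACGTACGT", guides))  # Call(status='ambiguous', target=None, distance=1)
print(assign("CGTACGA", guides))   # Call(status='rescued', target='Guide A', distance=1)
```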

Start from the repo. Cite the exact release.

Use the source install until the public package channels finish publishing. Current distribution: source install, release artifacts, and a Bioconda recipe PR with passing CI. Coming next: PyPI, the Bioconda merge, Docker/Singularity images, and a Zenodo DOI.

Clone the repo and run the release check.

```bash
git clone https://github.com/dnncha/dotmatch.git
cd dotmatch
make
python3 -m pip install .
make repository-ready
```

Use the release citation and a matching methods sentence.

If DotMatch helps an analysis, cite the software release. The methods note has short wording for CRISPR guide counting, one-edit Levenshtein rescue, and Hamming-only comparisons.

The main public comparison is deliberately narrow.

The public CRISPR benchmark is the best-supported comparison today: Yusa-style guide counting, checked-in rows, and validation against the assignment oracle.

Who this is for.

The same engine serves a few different readers. The strongest adoption path today is CRISPR guide counting, but the audit trail is useful anywhere short reads must land on a fixed target list.

CRISPR screen users

Count guides from FASTQ/FASTQ.gz into MAGeCK-compatible matrices, with exact, rescued, ambiguous, and unmatched reads in the QC.

Sequencing cores

Demultiplex fixed-position inline barcodes while keeping ambiguous and unmatched reads available for review.

Bioinformatics developers

Use the C core, CLI, Python bindings, schemas, validation commands, and raw benchmark artifacts.

Methods reviewers

Inspect the claim gates, raw CSVs, exact commands, and validation against exhaustive or Edlib checks.
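For the sequencing-core case above, fixed-position inline barcodes reduce the same rules to Hamming distance on a slice. The sketch below, with hypothetical function and bin names (it is not the `dotmatch demux` implementation), splits reads into per-sample bins and keeps ambiguous and unmatched reads in their own bins for review. It also flags barcode pairs within 2k mismatches of each other, because for such a pair some read can sit within k mismatches of both.

```python
from collections import defaultdict
from itertools import combinations


def hamming(a: str, b: str) -> int:
    """Mismatch count between equal-length strings."""
    if len(a) != len(b):
        raise ValueError("Hamming distance needs equal-length strings")
    return sum(x != y for x, y in zip(a, b))


def risky_pairs(barcodes, k=1):
    """Barcode pairs within 2k mismatches: some read can be within k of both."""
    pairs = []
    for (s1, b1), (s2, b2) in combinations(sorted(barcodes.items()), 2):
        d = hamming(b1, b2)
        if d <= 2 * k:
            pairs.append((s1, s2, d))
    return pairs


def demux(reads, barcodes, start=0, k=1):
    """Bin (name, seq) reads by a fixed-position inline barcode, Hamming <= k.

    "(ambiguous)" and "(unmatched)" are hypothetical bin names for unassigned reads.
    """
    lengths = {len(bc) for bc in barcodes.values()}
    if len(lengths) != 1:
        raise ValueError("this sketch expects barcodes of one length")
    length = lengths.pop()
    bins = defaultdict(list)
    for name, seq in reads:
        window = seq[start:start + length]
        if len(window) < length:
            bins["(unmatched)"].append(name)  # read too short to hold a barcode
            continue
        dists = {sample: hamming(window, bc) for sample, bc in barcodes.items()}
        best = min(dists.values())
        hits = [sample for sample, d in dists.items() if d == best]
        if best > k:
            bins["(unmatched)"].append(name)
        elif len(hits) > 1:
            bins["(ambiguous)"].append(name)  # tie: keep it for review, never guess
        else:
            bins[hits[0]].append(name)
    return dict(bins)


barcodes = {"S1": "AAAAAAAA", "S2": "CCCCCCCC", "S3": "AAAAAACC"}
print(risky_pairs(barcodes))  # [('S1', 'S3', 2)]
reads = [("r1", "AAAAAAAAGT"), ("r2", "CCCCCCCAGT"), ("r3", "AAAAAAACGT"),
         ("r4", "GGGGGGGGGT"), ("r5", "AAA")]
print(demux(reads, barcodes))
# {'S1': ['r1'], 'S2': ['r2'], '(ambiguous)': ['r3'], '(unmatched)': ['r4', 'r5']}
```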

What is validated, early, or out of scope.

CRISPR guide counting is the strongest public evidence today. Other surfaces are useful, but the site keeps smoke tests and future distribution work separate from the primary evidence.

The fast path is tested against the native exhaustive scan for the same targets, error allowance, and ambiguity policy.

Guides, barcodes, primers, adapters, panels, and whitelist-style sequences where the candidates are already known.

Core code, bindings, tests, scripts, reports, schemas, and raw benchmark tables live in the repo.
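The fast-path-versus-exhaustive check described above can be made concrete: run both over the same targets, error allowance, and ambiguity policy, and collect every read where they disagree. This sketch uses Hamming k=1 with a hypothetical substitution-variant hash table as the fast path (a stand-in chosen for brevity, not DotMatch's index) and a plain scan as the oracle. Both share one `classify` function so the ambiguity policy cannot drift between them.

```python
import random
from collections import defaultdict


def hamming(a: str, b: str) -> int:
    return sum(x != y for x, y in zip(a, b))  # reads and targets share one length here


def classify(dists, k):
    """Shared policy: a unique closest target within k wins; ties are ambiguous."""
    within = {i: d for i, d in dists.items() if d <= k}
    if not within:
        return ("unmatched", None)
    best = min(within.values())
    hits = sorted(i for i, d in within.items() if d == best)
    return ("assigned", hits[0]) if len(hits) == 1 else ("ambiguous", None)


def exhaustive(read, targets, k=1):
    """Oracle: score every target, no shortcuts."""
    return classify({i: hamming(read, t) for i, t in enumerate(targets)}, k)


class SubstitutionIndex:
    """Hypothetical fast path for Hamming k <= 1: hash every one-substitution variant."""

    def __init__(self, targets):
        self.table = defaultdict(dict)  # sequence -> {target index: distance}
        for i, t in enumerate(targets):
            self.table[t][i] = 0
            for p in range(len(t)):
                for base in "ACGT":
                    if base != t[p]:
                        self.table[t[:p] + base + t[p + 1:]][i] = 1

    def lookup(self, read, k=1):
        if k > 1:
            raise ValueError("this index only covers k <= 1")
        return classify(self.table.get(read, {}), k)


def cross_check(targets, reads, k=1):
    """Return the reads on which the fast path and the oracle disagree."""
    index = SubstitutionIndex(targets)
    return [r for r in reads if index.lookup(r, k) != exhaustive(r, targets, k)]


rng = random.Random(7)
targets = ["".join(rng.choice("ACGT") for _ in range(10)) for _ in range(300)]
reads = []
for _ in range(2000):
    seq = list(rng.choice(targets))
    for _ in range(rng.randint(0, 2)):  # 0, 1, or 2 random substitutions
        seq[rng.randrange(len(seq))] = rng.choice("ACGT")
    reads.append("".join(seq))
print(len(cross_check(targets, reads)))  # 0
```

Any nonzero count would point at a bug in the fast path, since the oracle checks every target.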

Command-line first.

DotMatch is a small C/Python tool with a CLI and Python ctypes bindings. Runs can write count matrices, FASTQ splits, QC tables, assignment diagnostics, audit files, validation summaries, and self-contained HTML reports.

```bash
dotmatch crispr-count --library guides.csv --samples samples.tsv --guide-start 23 --guide-length 19 --k 1 --metric levenshtein --indel-window 1 --out counts.mageck.tsv --summary qc.json
dotmatch count --targets guides.csv --reads sample.fastq.gz --target-start 23 --target-length 19 --k 1 --metric levenshtein --indel-window 1 --report report.html --sample-qc sample_qc.tsv
dotmatch demux --barcodes barcodes.tsv --reads pooled.fastq.gz --barcode-start 0 --barcode-length 8 --k 1 --metric hamming --out-dir demuxed --summary demux.qc.json
dotmatch bcl-demux --run-folder 240101_RUN --sample-sheet SampleSheet.csv --out-dir bcl_demuxed --barcode-mismatches 1 --summary bcl.summary.json
dotmatch audit --targets guides.tsv --k 1 --out-dir audit
dotmatch inspect-unmatched --targets guides.tsv --reads sample.fastq.gz --target-start 23 --target-length 19 --k 1 --offset-window 2 --top 100 --out top_unmatched.tsv
dotmatch validate --targets guides.tsv --reads sample.fastq.gz --target-start 23 --target-length 19 --k 1 --indel-window 1 --oracle edlib --sample 100000
```

For short reads with known targets and real QC stakes.

Use DotMatch when exact one-edit assignment matters, when ambiguous or unmatched reads are as important as the counts, and when another lab should be able to inspect how the calls were made.
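One common reason for a large unmatched fraction is a target that sits a base or two away from the configured start. The sketch below is loosely modelled on the idea behind `dotmatch inspect-unmatched --offset-window 2 --top 100`, whose actual output format is not described here: it counts the most frequent unmatched windows and checks whether shifting the start by up to two bases would give exact hits. Function names and return values are hypothetical, and "unmatched" here simply means no target within one mismatch at the expected position.

```python
from collections import Counter


def within_one_mismatch(window, targets):
    """True if the window is within Hamming distance 1 of any same-length target."""
    return any(len(t) == len(window) and sum(a != b for a, b in zip(window, t)) <= 1
               for t in targets)


def inspect_unmatched(seqs, targets, start, length, offset_window=2, top=5):
    """Tally unmatched windows and check whether shifted starts hit a target exactly."""
    exact = set(targets)
    windows = Counter()  # unmatched window sequence -> number of reads
    offsets = Counter()  # start shift -> unmatched reads with an exact hit there
    for seq in seqs:
        window = seq[start:start + length]
        if within_one_mismatch(window, targets):
            continue  # matchable at the expected position (assigned or ambiguous)
        windows[window] += 1
        for off in range(-offset_window, offset_window + 1):
            s = start + off
            if off != 0 and s >= 0 and seq[s:s + length] in exact:
                offsets[off] += 1
    return windows.most_common(top), offsets


targets = ["ACGTACGTAC", "TTGGCCAATT"]
reads = ["NNNN" + "ACGTACGTAC" + "NN"]       # guide where expected
reads += ["NNNNN" + "ACGTACGTAC" + "N"] * 3  # guide one base later
reads += ["G" * 16]                          # no guide at all
top, shifts = inspect_unmatched(reads, targets, start=4, length=10)
print(top)     # [('NACGTACGTA', 3), ('GGGGGGGGGG', 1)]
print(shifts)  # Counter({1: 3})
```

A cluster of exact hits at one nonzero offset suggests checking the configured start position before changing the error allowance.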

Review the evidence